March 14, 2013

In a recent Laser Focus World article, Gail Overton discusses the metamaterial-based computational imager developed by Duke scientists and described in the Science paper "Metamaterial Apertures for Computational Imaging":

By Gail Overton

Senior Editor

"Although it currently operates in the microwave region, a metamaterial-based computational imager from scientists at Duke University (Durham, NC) and the University of California–San Diego (UCSD; La Jolla, CA) could be engineered to operate at infrared (IR) and other photonic wavelengths [1]. What’s more, it eliminates the typical physical components of an imaging system, including lenses and associated scanning or moving parts. The guided-wave metamaterial aperture achieves two-dimensional (2D; one angular coordinate and range) image acquisition at 10 frames/s using frequencies over the 18 to 26 GHz range. A physical layer compression ratio of 40:1 is demonstrated.

Computational/compressive imaging

In conventional imaging, a lens can be thought of as forming measurement modes that map all parts of a scene to a detector plane. Each mode contributes specific, localized information (such as intensity, phase, angle, and distance to the detector), with all modes being captured simultaneously by a CCD, CMOS, or other detector array.

Alternatively, single-pixel imagers can capture scene information sequentially by scanning mode patterns over the scene; for example, a single-pixel imager developed at Rice University (Houston, TX) makes use of a random mask and two lenses to project incoherent modes onto a scene; the scene is then recovered from the set of measurements using emerging computational imaging algorithms.

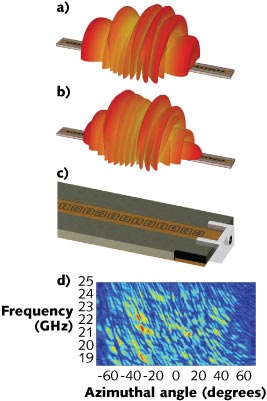

In this most recent Duke/UCSD demonstration, a microwave metamaterial imager is formed using a planar waveguide that feeds microwave energy to an array of resonant, metamaterial elements called electric-inductor-capacitors (ELCs). These create a 1D aperture and remove the need for lenses and scanning. In the device, the resonance frequencies of the ELCs are distributed randomly over the 18 to 26 GHz bandwidth. The waveguide thus acts as a coherent single-pixel device, with the ELC array producing a set of complex spatial modes whose patterns vary as a function of the excitation frequency.

The metamaterial aperture

Basically consisting of a leaky waveguide, the 1D metamaterial aperture is formed by patterning the top conductor of a standard microstrip line with complementary ELCs. The geometry of the ELCs along the microstrip can be controlled in order to affect the scattered-field characteristics such as amplitude and phase of the transmitted wave, enabling true engineering of any desired far-field mode. For resonant metamaterials, frequency is the logical choice for indexing the modes. Sweeping the frequency of the illuminating signal across the available bandwidth accesses varying mode patterns sequentially without having to move or reconfigure the aperture.
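The frequency-indexed measurement described above can be sketched as a matrix-vector product: each row of a measurement matrix holds the mode pattern at one frequency, and a sweep yields one complex value per row. This is only an illustrative sketch. The random complex matrix is a stand-in for the real ELC mode patterns, and the names `random_mode_matrix`, `sweep`, and `n_angles`, the 64-bin angular grid, and the uniform spacing of the 101 frequency points are assumptions, not details from the article.

```python
import numpy as np

def random_mode_matrix(n_freqs, n_scene, seed=0):
    """Stand-in measurement matrix: one random complex mode pattern per frequency.

    Assumption: real ELC modes are replaced by complex Gaussian values.
    """
    rng = np.random.default_rng(seed)
    H = rng.standard_normal((n_freqs, n_scene)) \
        + 1j * rng.standard_normal((n_freqs, n_scene))
    # Normalize every column (scene bin) to unit length.
    return H / np.linalg.norm(H, axis=0)

def sweep(H, scene):
    """Simulate a frequency sweep: one complex measurement per row of H."""
    return H @ scene

# 101 frequency points across the 18 to 26 GHz band (uniform spacing assumed).
freqs_ghz = np.linspace(18.0, 26.0, 101)
n_angles = 64  # hypothetical number of angular bins in the scene

H = random_mode_matrix(freqs_ghz.size, n_angles)

# A toy scene with two point scatterers at hypothetical bins 10 and 40.
scene = np.zeros(n_angles, dtype=complex)
scene[[10, 40]] = [1.0, 0.5]

g = sweep(H, scene)  # the single-pixel data: one value per frequency
print(g.shape)
```

Because the aperture is linear in this picture, the measurement vector is just a weighted sum of the columns of `H` that correspond to the scatterers, which is what a reconstruction algorithm later has to untangle.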

Simulated far-field profiles are shown for the metamaterial imager at 18.5 GHz (a) and 21.8 GHz (b). These resonant microwave metamaterial apertures (c) can be engineered to create any imaging mode. A measurement matrix (d) shows frequency information as a function of angle of the mode data. (Courtesy of Duke University)

Though demonstrated at microwave frequencies, a similar computational/compressive imager could be developed at terahertz or IR wavelengths. The group at Duke is currently engaged in evaluating similar imaging devices for the near- and far-IR bands, where large detector arrays can be expensive. The elements of the metamaterial imager—guided-wave structure and metamaterial layer—can be transitioned to shorter wavelengths using fabrication approaches common to photonic systems.

Illuminated by a low-directivity horn antenna, backscattered radiation from objects in a scene fills a 40-cm-long metamaterial aperture fabricated to operate in the 18 to 26 GHz range. In one experiment, a 4 × 4 × 3 m chamber contained several 10-cm-diameter scattering retroreflectors. The aperture admits only one specific mode at each measured frequency. In the experimental measurements, a 1.7° diffraction-limited angular resolution and 4.6 cm bandwidth-limited range resolution were achieved. The measurement contained only 101 values, implying a compression ratio of 40:1 in a 100 ms acquisition timeframe.
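To make the 40:1 figure concrete, the sketch below recovers a sparse scene of 4040 unknowns (101 × 40, assuming the ratio is defined as scene unknowns per measurement) from 101 noiseless measurements. The article does not name the reconstruction algorithm, so orthogonal matching pursuit (OMP) is used here purely as a simple stand-in. The random complex matrix again substitutes for the real aperture modes, and the function name `omp`, the scatterer positions, and the amplitudes are hypothetical.

```python
import numpy as np

def omp(H, g, n_nonzero):
    """Orthogonal matching pursuit: greedily pick the columns of H that
    best explain g, refitting the chosen coefficients by least squares."""
    residual = g.copy()
    support = []
    coeffs = np.zeros(0, dtype=complex)
    for _ in range(n_nonzero):
        correlations = np.abs(H.conj().T @ residual)
        if support:
            correlations[support] = 0.0  # never pick the same column twice
        support.append(int(np.argmax(correlations)))
        coeffs, *_ = np.linalg.lstsq(H[:, support], g, rcond=None)
        residual = g - H[:, support] @ coeffs
    estimate = np.zeros(H.shape[1], dtype=complex)
    estimate[support] = coeffs
    return estimate

n_meas = 101            # measurement count quoted in the article
n_scene = n_meas * 40   # assumed reading of the 40:1 compression ratio

# Stand-in measurement matrix (assumption: random complex Gaussian modes).
rng = np.random.default_rng(0)
H = rng.standard_normal((n_meas, n_scene)) \
    + 1j * rng.standard_normal((n_meas, n_scene))
H /= np.linalg.norm(H, axis=0)

# Sparse toy scene: three point scatterers at hypothetical positions.
scene = np.zeros(n_scene, dtype=complex)
scene[[500, 2000, 3100]] = [1.0, 0.7, 0.5]

g = H @ scene                       # 101 undersampled measurements
estimate = omp(H, g, n_nonzero=3)   # sparsity level assumed known

print(np.flatnonzero(estimate))
```

The recovery only works because the toy scene is sparse and the sparsity level is handed to the algorithm; a real scene with noise would need a more robust solver and a regularization choice.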

'The theoretical approach of compressive imaging suggests that you can undersample a scene, yet still recover full diffraction-limited information,' says Duke professor David Smith. 'This experiment indicates that the metamaterial architecture is incredibly well-suited to serve as the hardware implementation for compressive and other computational imaging schemes.' "

REFERENCE

1. J. Hunt et al., Science, 339, 310 (Jan. 18, 2013).
